Admissions decisions depend on evaluating applicants in a fair, consistent, and defensible way – and the traditional essay is increasingly unreliable for that purpose. Generative AI tools now allow applicants to produce polished written responses with minimal original input, making it difficult to determine what reflects the applicant’s own voice and perspective.

Institutions across disciplines are beginning to adjust. The Association of American Medical Colleges added an AI-specific certification statement to the 2025 AMCAS application, relying largely on applicant self-attestation. At Duke University, admissions leadership stopped assigning numerical ratings to essays after concluding they could no longer assume submissions reflected authentic writing ability. Admissions expert Kevin O’Hara states that if programs cannot confidently determine authenticity, essays may become less central to admissions decisions altogether.

Frontline reviewers are reporting noticeable changes in applicant writing. Former admissions professional Will Geiger notes an increase in formulaic structure and repetitive language characteristic of AI assistance rather than personal expression. The implications extend beyond authenticity to fairness. Applicants with greater access to advanced tools, coaching, and prompting expertise can use AI more effectively, amplifying existing advantages rather than leveling the playing field.

For graduate programs seeking to select best-fit students, this creates a fundamental evaluation gap. When authorship cannot be confidently established, written responses alone provide limited insight into communication skills, critical thinking, or readiness for graduate study.

Programs are turning to direct applicant voice

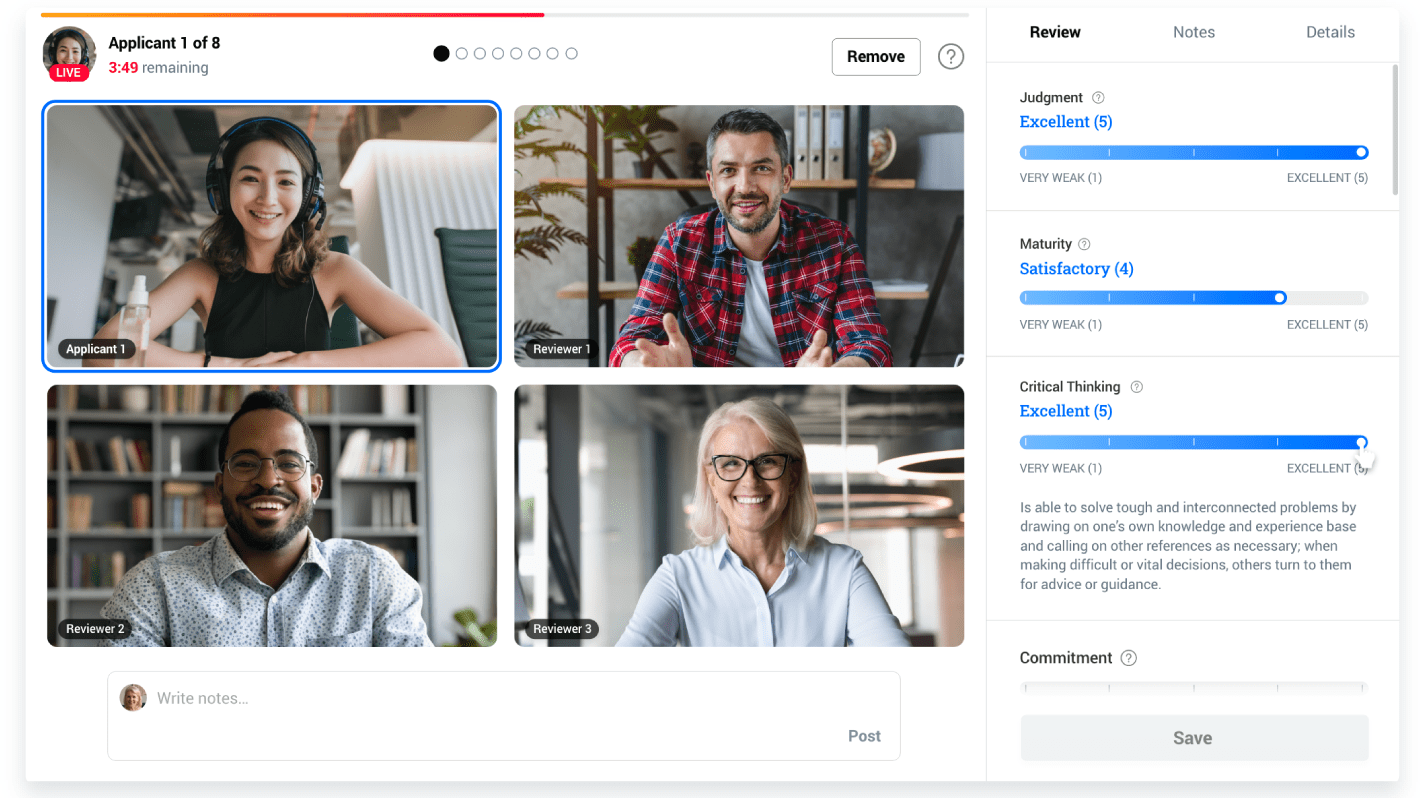

As confidence in written essays declines, many graduate programs are prioritizing assessment methods that allow applicants to demonstrate their abilities directly. Video assessments are emerging as a practical tool, enabling review teams to evaluate communication skills, reasoning, and interpersonal competencies in a format that is difficult to outsource or heavily edit.

Video is increasingly used as an authenticity layer that strengthens confidence in other application materials. A recent Washington Monthly feature, “AI Is Killing the College Essay. Enter the ‘Video Essay,’” highlighted how institutions are experimenting with recorded responses to better understand applicant readiness.

Admissions professionals consistently value hearing from candidates in their own words. In Kira Talent’s 2025-26 Client Experience Survey, interview performance and GPA ranked as the two most important factors in admissions decisions, underscoring the importance of direct interaction. Structured video assessments, supported by clear prompts and competency-based rubrics, allow programs to gather this insight consistently across large applicant pools while maintaining fairness, transparency, and comparability.

Video enables scale – yet reviewing at scale requires dedicated capacity

Asynchronous video assessment allows programs to gather authentic insight without the logistical constraints of live interviews. Institutions design questions aligned to their priorities, applicants respond on their own time, and reviewers evaluate responses when capacity allows. Because every applicant receives the same prompts and is assessed using the same criteria, programs can maintain consistency across large applicant pools while expanding access and flexibility.

"By using video interviews instead of essays, we gained so much time back. Not just for admissions, but for faculty as well. And students can show us who they really are in a way an essay just can’t."

-Cher Knupp, Director, Health Sciences Admissions at Salt Lake Community College

However, the operational demands of reviewing video responses at scale are substantial. Application volumes continue to rise across graduate programs. In Kira Talent’s 2025-26 Client Experience Survey, more than 55% of admissions professionals reported receiving more applications than the previous year, increasing pressure to evaluate candidates efficiently without compromising quality.

Video review is inherently time-intensive: while reading an essay may take only a few minutes, watching a full set of responses typically requires 15–30 minutes per applicant. For a program receiving 5,000 applications, this represents at least 1,250 hours of review time at 15 minutes per applicant, and up to 2,500 hours at 30 minutes – equivalent, even at the low end, to more than 150 full working days for a single reviewer.
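The arithmetic behind this estimate can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the 8-hour working day is an assumption that the figures above do not state explicitly.

```python
def review_hours(applicants: int, minutes_per_applicant: float) -> float:
    """Total hours needed to watch every applicant's full set of responses."""
    return applicants * minutes_per_applicant / 60


def working_days(hours: float, hours_per_day: float = 8.0) -> float:
    """Convert review hours to working days (8-hour day is an assumption)."""
    return hours / hours_per_day


applicants = 5_000
low = review_hours(applicants, 15)   # lower bound: 15 minutes per applicant
high = review_hours(applicants, 30)  # upper bound: 30 minutes per applicant

print(f"Review time: {low:,.0f} to {high:,.0f} hours")
print(f"Working days for one reviewer: {working_days(low):.2f} to {working_days(high):.2f}")
```

Under these assumptions, the 1,250-hour figure corresponds to the 15-minute lower bound; at 30 minutes per applicant the total doubles.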

Most admissions teams do not have the capacity to absorb this workload during peak cycles without risking reviewer fatigue, delays, or inconsistent evaluation. To maintain rigor at scale, many institutions supplement internal staff with trained professional reviewers who apply the program’s own rubrics and criteria. This approach extends capacity without requiring permanent hires or overburdening faculty, while preserving academic oversight through flexible models such as faculty-plus-professional review teams.

Kira Talent’s Reviewer Services provide access to more than 30,000 trained educational raters, many drawn from ETS’s established scoring programs for assessments such as GRE®, TOEFL®, and Praxis®. Reviewers are trained on each institution’s competencies, calibrated to ensure consistent scoring, and monitored throughout the cycle for accuracy and timeliness. Programs report significant time savings – often hundreds of faculty hours per cycle – while maintaining fairness, reliability, and transparency in decision-making.

"Even with all those extra candidates, we’ve managed to reduce our decision timeframe and get offers out to applicants faster. As a result, we’re seeing record enrollment."

-Larry Fillian, Associate Dean of Enrollment Management and Student Success at NYU SPS

By combining asynchronous video assessment with structured professional review, institutions can capture authentic applicant insight at scale without sacrificing evaluation quality or overloading admissions teams. This integrated approach enables programs to process growing applicant volumes while maintaining the consistency and defensibility required for high-stakes decisions.

Institutions are demonstrating what scalable, fair review looks like

Programs across disciplines demonstrate that high-volume video assessment can be implemented without sacrificing rigor or overburdening staff. By combining structured asynchronous assessments with additional review capacity, institutions manage growing applicant pools while maintaining consistent evaluation standards.

- McMaster University Engineering – conducted a holistic review of 13,000+ applicants, scored by Reviewer Services within a five-week window

- NYU School of Professional Studies – increased enrollment while saving over 3,000 hours of admissions work per cycle

- Berklee College of Music – streamlined the review of 4,000+ applications, reducing turnaround time to just five days

- Yale School of Management – replaced standardized English exams with Asynchronous Video and Reviewer Services

- Salt Lake Community College – scaled holistic review across nine healthcare programs, saving four and a half weeks of staff time

These examples illustrate a common outcome: when review capacity aligns with application volume, programs can move faster while preserving fairness, consistency, and attention to each applicant.

A practical path forward for scalable admissions

For graduate programs, the challenge is no longer simply identifying strong applicants – it is doing so in a way that remains fair, consistent, and defensible as both technology and application volumes evolve. Asynchronous video assessment offers a reliable way to understand how applicants think and communicate, providing insight that is difficult to replicate through AI-assisted written materials.

However, authenticity alone is not enough. Without sufficient review capacity, even the most effective assessment methods can create new pressures on admissions teams. Structured reviewer support ensures that programs can evaluate responses thoroughly and consistently, without overloading staff or delaying decisions.

Kira Talent integrates these capabilities into a single approach: standardized video assessments that capture authentic applicant responses, combined with trained reviewers who apply each program’s own criteria. This approach enables institutions to maintain rigorous standards while adapting to rising volumes and changing expectations.

As the role of traditional essays evolves, programs that prioritize direct interaction, structured evaluation, and sustainable review capacity will be positioned to select their best-fit students while preserving fairness for every applicant.