In May 2018, Jeff Selingo wrote in The Atlantic about how schools with high applicant volume need to get creative with their admissions criteria, noting that half of American teenagers graduate high school with an A average.

The problem affects students on both ends of the academic spectrum: With so many high-scoring students, highly competitive schools need to find alternative methods for identifying the best candidates for their programs.

At the same time, extremely talented students who may not have top grades are being overlooked, and are often forced to pursue a different path or are unable to access higher education at all.

In British Columbia, Canada, Kwantlen Polytechnic University has introduced a pilot program in the hopes of validating a new approach to selecting students: Admission based on competency.

Wait, admissions without grades?

Instead of selecting students based on their grades, a small subset of students in Surrey, BC were evaluated and admitted based solely on their portfolio submissions. These students, who may or may not have had strong transcripts, were enrolled based on their potential shown through work samples, which could be anything from writing samples and visual art to science experiments or musical performances.

Dr. David Burns is an education studies professor at Kwantlen who is currently leading the investigation into how competency-based admissions policies could change the way BC students apply to university. For the students currently in the study, he does not know their formal, academic grades, only their portfolio work.

Burns and one of his students, Theresa Henderson, were interviewed by Maclean’s Magazine earlier this year.

“It is so important to get to know someone and not just look at their report card and grades and assume they didn’t try,” Henderson told Maclean’s. “It is so nice to get into this [Kwantlen] program to let people know I am not about my grades.”

To learn more about the competency-based admissions pilot at Kwantlen, I was fortunate to speak with Dr. Burns by phone in May about how this program started and what other schools could learn from his experience.

Below is a transcript of our conversation, lightly edited for length and clarity.

MM: To get started, can you give us some background on how the competency-based admissions project began?

DB: The BC curriculum shifted towards competency-based curricula at the K to 12 levels. And that process is about two years away from being fully implemented K-12.

About two years ago, it became pretty clear that there was a very significant need to get post-secondary institutions to take a much more serious look at whether that reform process might continue into the post-secondary system.

And the problem that I started with was that it seemed pretty clear that if the project-based, inquiry-based, competency-based changes were not reflected at the university level then this would essentially disincentivize people from taking it seriously in high school.

The most powerful lever there is to incentivize having people continue to take it seriously and really, really dive into the reforms in the spirit of the change would be to have that reflected in admissions systems because admissions systems are one of those things that gets communicated, and very powerfully of course.

People know when admissions standards change and they’re an important way for us to indicate what we value in incoming students. So the shift from saying, We value your standardized test scores or your classroom grades, to saying, We value what you know and can do is significant. Pedagogically and morally.

But it also exerts a pretty important incentive on the education system as a whole.

Because if you work backwards from the university, then if they start saying we want to hear about your competencies, then grade 12 students start to think differently about how they’re developing and articulating in grade 12, and then parents and schools, so forth, think more about how they’ll support those things so it’s a really healthy and important part of the policy change process.

MM: You’ve started looking at this and you see the value as a researcher, but how did you go about attracting students and teachers and engaging them in this topic?

DB: Well there are a couple of pieces to it. There is the general public communication piece and then there is the really specific piece about the things that I am testing and looking into.

For the things that I am testing and looking into: We started off by partnering with one of the local school districts, for this one it’s Surrey, and collecting a couple of dozen student portfolios that were anonymized and then just looking through them in an exploratory sense to see what students were doing right now in terms of their personal portfolios in grade 12.

We took a look at them and asked a number of questions, but the big one was: “Do these things tell us anything that universities could use to consider admissions?”

So, for that, we found students by partnering with a teacher, going out to a school and sending out a study flyer. And then there was the second phase, where we actually brought students in to work with us. And these are the people that are cited in that Maclean’s Magazine piece.

We partnered with the school district and I said to him, my counterpart in Surrey’s schools: “Don’t tell me what their grades are, don’t tell me what their report cards have been like, just bring people that have interesting and creative things to say and do.” That’s it.

And so they brought us a group of students like that and to this day - I’ve been working with them since just before Christmas - I still don’t know any of their actual, formal grades. I’ve just read a bunch of their work and worked on it with them. And so we found those people by asking teachers: Who should we be talking to about this? Who is a good example of human potential that may or may not be really well recognized?

And if you look at the student work that we’ll be publishing soon, there is a whole range of students that you would say have no trouble at all succeeding in the traditional system and students who likely might be challenged in the traditional system because their form of achievement is just a bit different from the one that we typically recognize.

MM: In a University Affairs article you were interviewed in, you mentioned that you had an essay by a student that had several grammatical errors but the content itself was excellent and clearly the student had a ton of potential. Can you speak more to why schools need to be looking for things like that and shifting their perspective to looking at what the student is actually trying to say, and not necessarily the technical way that they’re presenting it?

DB: Well, we have to unpack ‘technical’, right? There is the grammatical sense in which case that’s important but it is not the only thing we should be looking for. And the problem is that people have lots of technical detail in the social-scientific sense.

I believe that essay was a grant proposal so it was technically sound, it talked about democracy and things like that in a technically-sound way. It was just grammatically not technically strong.

And the idea that we would allow the grammatical structure to totally negate the value of the technical content from the discipline is, I think, quite ill-informed, right?

It’s important to people to be able to communicate clearly and we should teach that, but that’s not the only important thing.

MM: Exactly. And I think what made me think of it is, for example, when students are finishing school and they’re going out and applying to jobs. It’s the big red flag of, oh you know, if you have a typo on your resume, they’re going to throw it right out the door. It’s like, well, is a typo in a resume necessarily the thing to judge a student’s entire potential on?

DB: Exactly. The problem is that it’s used as a proxy. It’s used as a cue and then we make all those inferences about other things based on that cue because it’s really readily observable.

You can see typos in a way that you can’t see competencies, at least the way we currently report them.

MM: Exactly. So, on that note, a lot of schools think this all sounds great, in theory, but they’re concerned that evaluating competencies would be too subjective and too open to bias because competency-based criteria aren’t readily available to see. How do you respond to those concerns?

DB: Well it is almost uniformly the case that that is not really what the person means when they say it. I get this question very, very frequently.

And there is a good critique there, but it’s almost never articulated clearly. Because numbers are not objective, on their own. Objectivity is not a property of numbers. The same is the case with grades, which is what people usually mean when they say grades are objective.

I think what they mean is that they are easy to communicate unambiguously. That does not mean that they are an objective, valid, reliable representation of achievement. I can be arbitrary in a way that is totally consistent and that is not somehow more objective.

Because the arbitrariness can come from subjective beliefs. This is kind of how racism works. Racists can be really, really consistent on an arbitrary point.

MM: For a school that is sharing these concerns, how are you building a way to fairly evaluate these competencies?

DB: Two points. The first thing is we have to reteach, sometimes, especially to students, what grades do and what they don’t. Because we do not educate people very well about the limitations of grades.

If you ask the average student what they think the error of measurement is on their grades I can’t imagine them giving a precise answer. Whereas in the educational literature, there’s a pretty large window there for a teacher-created assessment. But we behave as if we know they got 79%, when we almost never do. It’s much more accurate to say they got somewhere between 73%-85%. That would be a strong, relatively reliable test. So we need to make that one clear.

And then for competencies, if we are using the objectivity standard, it makes more sense, instead of having, let’s say, one biology mark in grade 12, if you take 20 learning outcomes in biology 12, indicating proficiency in all 20 would be much more reliable and objective. There is more room for error there. Can you generally demonstrate this thing? Yes? No?

Or perhaps on a 3-point scale: Not Yet Proficient, Proficient, or Exceeding Expectation, or something like that. So that’s actually a much more reliable system because it’s not pretending that we have measurement accuracy that we really haven’t had.
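As a rough illustration of the two ideas above, the sketch below reports a mark as a range rather than a single number, and summarizes per-outcome proficiency on a 3-point scale. The 6-point margin is simply what the 73%-85% example implies for a mark of 79%; the function names and the sample biology outcomes are hypothetical and are not part of the Kwantlen pilot.

```python
from enum import Enum


class Level(Enum):
    """The 3-point scale mentioned in the interview."""
    NOT_YET_PROFICIENT = 0
    PROFICIENT = 1
    EXCEEDING_EXPECTATION = 2


def score_band(observed, margin):
    """Return the (low, high) range implied by a mark and an assumed margin of error."""
    return (max(0, observed - margin), min(100, observed + margin))


def summarize(outcomes):
    """Count how many learning outcomes sit at each proficiency level."""
    counts = {level.name: 0 for level in Level}
    for level in outcomes.values():
        counts[level.name] += 1
    return counts


# A mark of 79 with an assumed margin of 6 points, matching the 73%-85% example.
band = score_band(79, 6)

# Hypothetical biology outcomes; a real course would list all 20.
outcomes = {
    "cell structure": Level.PROFICIENT,
    "lab safety": Level.NOT_YET_PROFICIENT,
    "genetics": Level.EXCEEDING_EXPECTATION,
}
summary = summarize(outcomes)
```

The per-outcome summary keeps each result visible instead of folding everything into one percentage.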

MM: Great, thank you, that’s really helpful. When you put it in that context of breaking it down to the different learning outcomes I think that’s something a lot of people can see visually and relate to when they’re trying to wrap their head around how they might do an assessment like this.

DB: One of my rhetorical examples that I kind of always come back to: Imagine you have somebody in grade 12 from a lab course - say a chemistry lab. And they got a 0% on the safety unit because they burnt the lab down. And on the multiple choice exam the week after, they got 100%. The conventional letter grade approach is to throw those things into one bucket and then describe that bucket to you.

If you got 0 and 100, because the multiple choice test was probably weighted more heavily, you end up with a 60% or 70% in that course since those were the only two assessments. Then you go to a university - would the university rather know the 70% or whether or not the student burnt the lab down?
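The arithmetic in this example can be sketched in a few lines. The 30/70 weighting is an assumption chosen to reproduce the 70% figure (the interview only says the exam was "probably weighted more heavily"), and the names used here are hypothetical.

```python
def weighted_grade(scores, weights):
    """Combine assessment scores into one percentage using the given weights."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# The two assessments from the lab example.
scores = {"lab_safety": 0, "multiple_choice_exam": 100}

# Assumed weights: the exam counts for 70% of the course mark.
weights = {"lab_safety": 30, "multiple_choice_exam": 70}

final_mark = weighted_grade(scores, weights)

# A per-assessment report keeps the information the single mark hides.
report = {name: ("demonstrated" if score >= 50 else "not demonstrated")
          for name, score in scores.items()}
```

The single mark of 70 says nothing about the failed safety unit, while the per-assessment report shows it directly. The 50-point threshold is also an illustrative assumption.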

MM: For you at Kwantlen, competency-based admissions is a pilot right now. What does success look like for this pilot?

DB: People get a little bit confused with this because they think that in a year I’m going to say something like “the students were 8% more successful.”

We can’t make that claim because it’s not designed in the way to create a generalizable proposition like that. And if it were, it would be unethical, because I can’t watch a student struggle and then fail to support them.

The point is to bring in students, in a diverse but small group, and then to simply prove that our admissions system can accommodate that in a meaningful way. I would then be able to say that it is not impossible or utterly impractical, because we would have set up policies and digital systems to do it.

It’s a proof of concept.

MM: Right. So you’re really looking to validate that this can be done.

DB: Precisely.

MM: The other big concern people have, of course, is that competency-based admissions would never work because it's too time-consuming. What is your response to that?

DB: That applies in cases where you’re expecting the universities or the post-secondary institutions to read all of the portfolios and then attribute the competencies to them. But even if we could do that, in the sense of having the resources to have people read them, it would be a poor idea anyway because we still would not know as much about that student’s broad spectrum of achievements as their teachers did.

So the best system would be to have their competencies validated at the secondary level and communicated to post-secondary institutions. And then in that way, it would be the same amount of time for everybody involved as was before because you already have to assess learning outcomes at the high school level.

MM: Of course, that’s a great approach. This has all been really incredible and informative, but just to wrap up: What would be your overall recommendations for schools that are reading this post right now and thinking, “Oh wow this all sounds great, I want to get started”? How would you advise someone who’s in that situation?

DB: We’re at a point in the history of public education where we have to ask really fundamental questions that have not been opened up for a long time.

So, returning to first principles, what are we measuring in the first place? Who’s going to use that and try to understand it? What’s the purpose of that measurement? Who are we communicating it to? And so forth. Because we have a lot of systems that are based on assumptions that don’t need to be assumed anymore.

A lot of the grading system is designed for a pre-digital world because it is easy to summarize achievement, write it down on a single sheet of paper, and then mail it somewhere. But my cell phone processes that much data, or the equivalent of that much data for every student in British Columbia, every day, just sending pictures around.

Some of these assumptions, like how much detail can we have, just need to be questioned at the baseline.

So, I would recommend schools start by asking: At your school system, why are you measuring achievement? And what does achievement look like? And then starting from that baseline, and saying, Ok, does our system match our answers? You’re going to find some pretty significant gaps.

MM: Alright, that’s great, thank you so much. I’m going to listen to this over again a couple of times because I feel like I have a lot to think about from what you said, but I’m so excited that we have an opportunity to share a little glimpse into your world with some more folks in higher education.

DB: Well thank you very much.

Looking for more on competency-based admissions?

- Three Types of Competency-Based Assessments in Admissions (And How to Use Them)

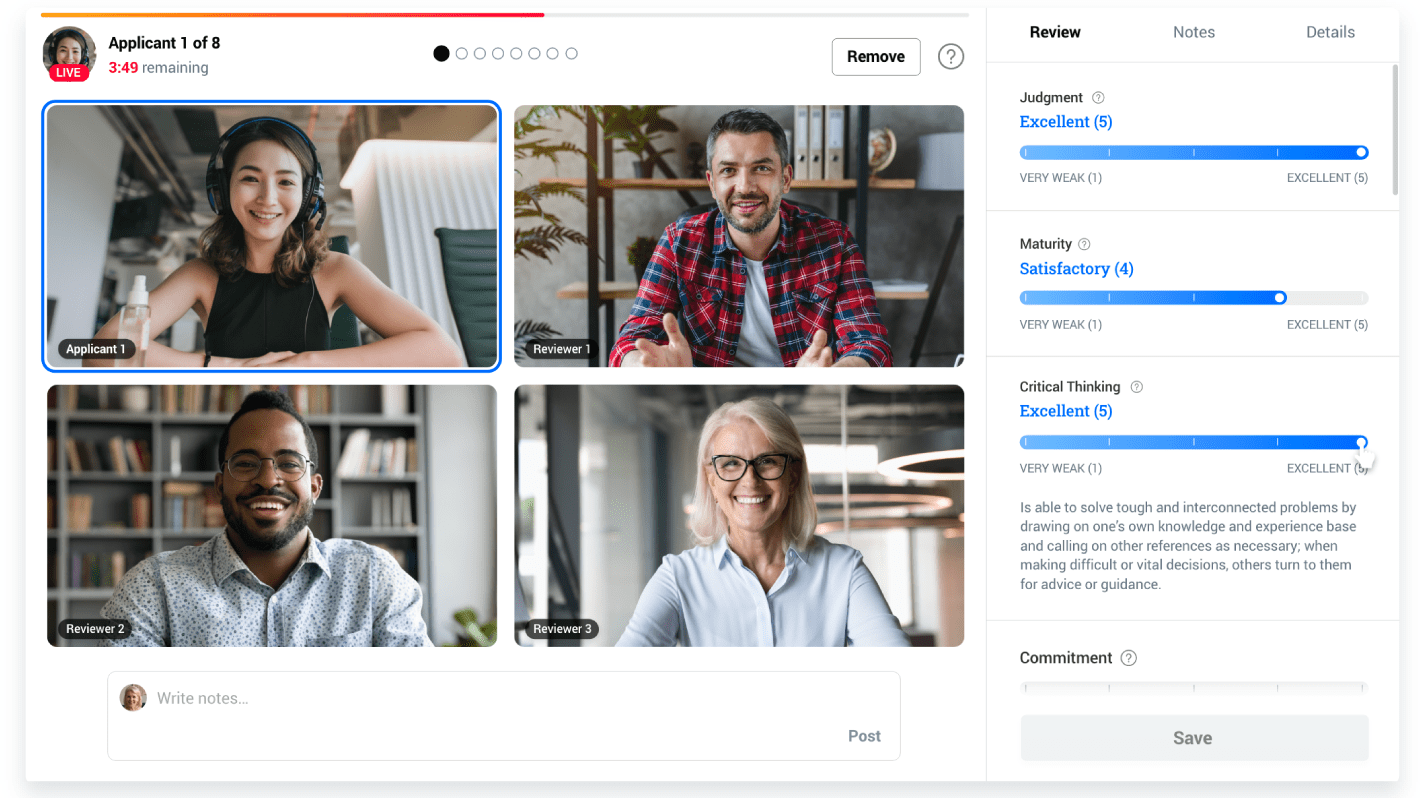

- Schedule a call with Kira Talent about our holistic admissions platform

- Using Co-Curricular Record/Transcript Data to Support Students