Regardless of how you may go about it, admissions can never be completely objective.

But that’s not necessarily a bad thing. Learned experiences help the tens of thousands of admissions professionals around the world identify the best and brightest amongst a pool of applicants. After all, engaging, assessing and admitting tomorrow’s leaders requires more than a computer algorithm with a checklist — it requires that human touch.

The goal, then, is not to train biases out of your admissions team, but to build a process that mitigates the biases that give some applicants an unfair advantage over others.

With that in mind, we sat down with Jamie Young to discuss the presence of bias in admissions and what schools can do to minimize its impact.

Having worked in higher education admissions for over 12 years, Jamie Young has brought his insights and expertise to Kira Talent over the past three years. In his role leading the Client Success team at Kira, Jamie sees first-hand how schools around the world are addressing the challenge of bias mitigation in admissions — often when time and budget are limited.

We asked Jamie to share what he has learned from his experiences as the Director of Recruitment and Admissions for the Full-Time MBA at Rotman School of Management, and the Associate Director of Admissions and Recruitment at the Sauder School of Business. Below you’ll find suggestions for how your school can optimize the practice of addressing bias in your admissions process.

Discover:

Why bias mitigation is so important

How Kira helps mitigate admissions bias

The nine forms of bias in admissions

- Groupthink effect bias

- Halo effect bias

- Confirmation bias

- Ingroup bias

- Conservatism bias

- Bizarreness effect

- Stereotype bias

- Status quo bias

- Recency bias

Why is bias mitigation so important for admissions teams today?

Jamie Young (JY): When we as admissions professionals consider today’s landscape, there seems to be a spectrum. On one end are the traditional admissions processes that rely on grades and standardized tests as explicit measures for admissions, scholarship, and financial aid decisions. On the other end, pioneering work is being done with artificial intelligence (AI), or at least machine learning algorithms, to find a better way to address the challenges of human selection.

Every admissions team I’ve worked on or with seems to fall somewhere on that spectrum, and regardless of its process, each has its own obstacles to navigate when it comes to mitigating bias. That’s why I think the first step toward real change is for teams to be aware of the different biases that can occur and how those biases can be mitigated within their specific admissions process.

How does Kira help mitigate common biases that manifest in the admissions process?

JY: Over the last nine years, Kira has worked with hundreds of admissions teams to identify and address biases in their admissions process. We’re focused on mitigating the biases that show up anywhere along that spectrum. For some schools, that means adding timed video or timed written responses to augment their existing holistic admissions practice; for others, it means adding a new component to a one- or two-dimensional process that provides new and important insights.

In order to help schools understand and identify how bias may be affecting their admissions decisions, we published the Breaking Down Bias in Admissions eBook that outlines the nine most common forms of admissions bias. We’ve used this research to guide our development of the products and services that continue to help our clients reduce biases in their processes. Although it’s not possible to remove all biases from the admissions process, by making it a focus of our product development, we can help ensure that our partner schools and their applicants have a fair process.

Below, Jamie outlines nine common forms of bias, gives examples of how and when they may occur in the admissions process, and explains how the Kira platform can help mitigate them.

Groupthink effect bias: When members of a group set aside their own opinions, beliefs, or ideas to achieve harmony.

What this looks like: The groupthink effect comes into play when reviewers are visible or communicative with one another during the scoring and decision-making processes. From commentary to facial expressions, reviewers can unintentionally influence one another’s decisions when they’re in the same room or on a video call together.

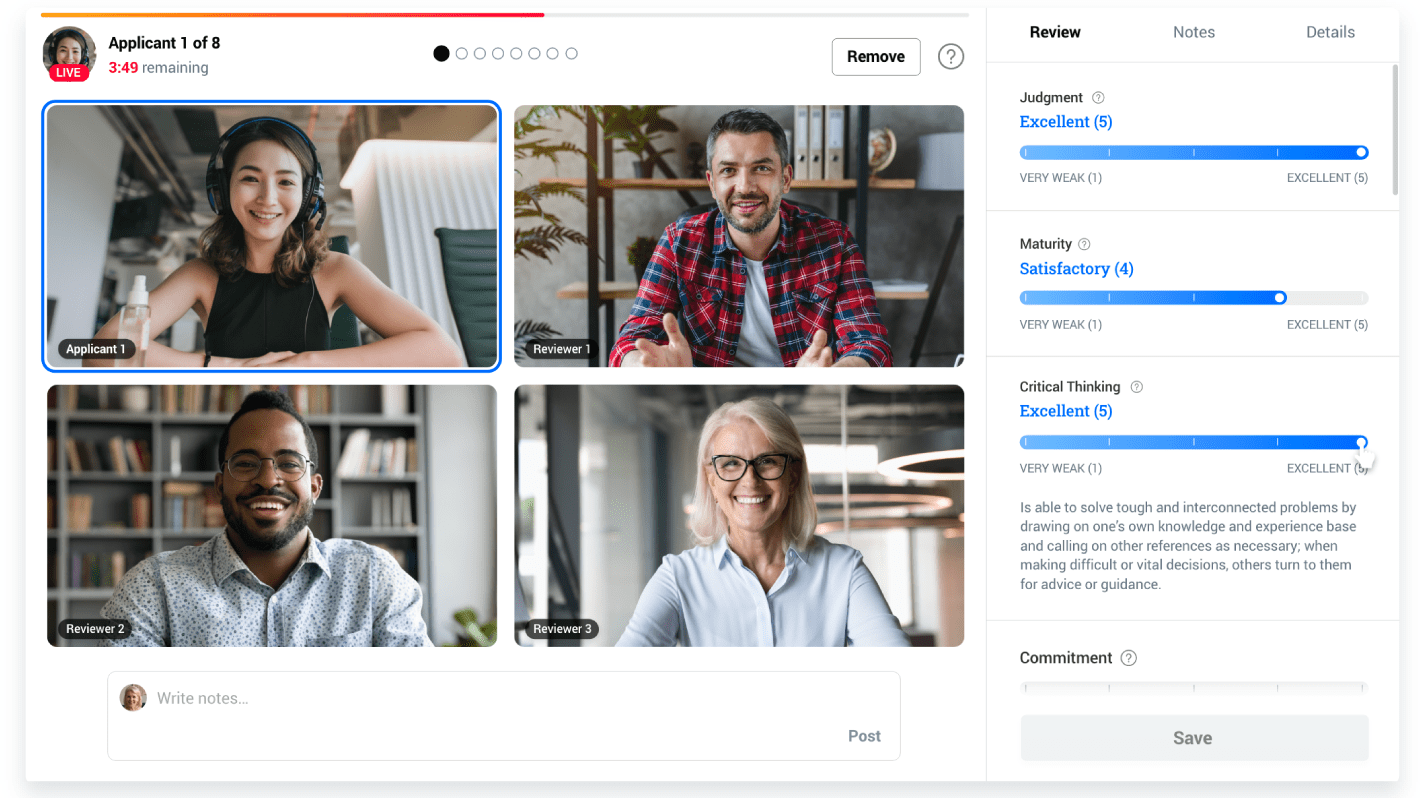

How Kira helps: Kira’s platform requires reviewers to assess applicants independently. Although administrators can see all applicant data, reviewers cannot view other reviewers' scores or comments and don't know which reviewers are assigned to which applicants. In this way, Kira removes the possibility of any communication that may influence a reviewer’s rating.

Halo effect bias: When one remarkable quality influences other factors in a decision.

What this looks like: Whether it’s one very high test score, a compelling story, or a strong reference, halo effect bias makes it easy for one excellent quality to overshadow any flaws in an application. Conversely, if an applicant’s first response in their interview misses the mark, that negative impression can subconsciously spill over into subsequent scoring.

How Kira helps: Both Kira’s Asynchronous Assessment and Live Interviewing have built-in tools that allow teams to engage more reviewers in the process by eliminating travel and enabling them to review when and where is most convenient for them. By gathering multiple, independent evaluations of each applicant, this process reduces the potential impact that any one reviewer has on an admissions outcome.

In an Asynchronous Assessment, Horizontal Review mitigates the halo effect by assigning reviewers to assess a wider pool of applicants for a specific competency.

In Live Interviewing, which was built to host a Multiple Mini Interview (MMI), applicants move through several stations, each with different reviewers and a different question or prompt.

This structure fundamentally addresses the halo effect bias, as stations and competencies are scored independently from one another, preventing previous responses from influencing scoring for a later response.

Confirmation bias: Seeking, interpreting, selecting, or remembering information in a way to support an existing belief or opinion.

What this looks like: A glowing recommendation letter, a degree from a prestigious school, or work experience at a well-known company can be interpreted as signals of a strong applicant. However, factors such as these often prompt reviewers to conclude prematurely that the applicant is a good fit for their program, and then to subconsciously look only for other factors in the application that support that conclusion. The struggle with confirmation bias is that, fundamentally, all humans like to be right.

How Kira helps: Gathering multiple, independent perspectives on an applicant is one of the most effective ways for schools to mitigate confirmation bias. Kira makes this easy by offering a flexible and efficient reviewing process: multiple reviewers can assess each applicant, and each reviewer can assess a greater number of applicants, because reviews can be completed where and when it is most convenient.

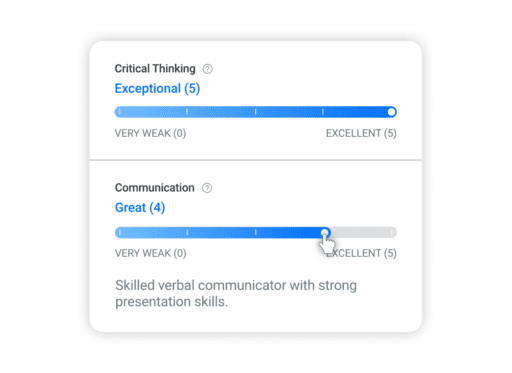

Kira’s structured review process, which includes custom rubrics and rating scales built into the platform, also helps reduce confirmation bias. By focusing each response on assessing a specific competency, admissions teams can help ensure that subsequent scores reflect that competency and not a previous part of the application that made an impression. Kira further supports this effort by plainly showing the custom descriptions of what each score for a specific competency looks like. By keeping a defined rubric clearly visible at all points of the interview, Kira’s structured review helps mitigate the impact of biases.

Ingroup bias: Giving preference to a person or organization that aligns with one's own group.

What this looks like: Ingroup bias often shows up around the edges of an interview, influencing the way questions are asked or some of the informalities which end up creating an uneven experience for applicants.

Imagine you’re evaluating an application and you learn that the applicant went through the same undergraduate program as you, grew up in the same city, or is also a single parent. This connection not only makes them stick out in your mind, affecting later discussion in admissions committee meetings, but it can also change the way you interact with them in an interview by making you act a little warmer or dig a little deeper for a great response.

How Kira helps: With in-person interviews, reviewers often start with small talk in order to put the applicant at ease and segue into the evaluation. Although these conversations may seem benign, they can unknowingly precipitate ingroup bias. Pre-recorded question videos in Kira’s Asynchronous Assessments ensure that every applicant is getting exactly the same experience.

Whether your team is using our Asynchronous Assessment or Live Interviewing, Kira’s Horizontal Review can reduce the impact of ingroup bias behind the scenes by helping you engage multiple reviewers in scoring each applicant, thereby reducing the impact of one reviewer scoring higher because of a shared connection.

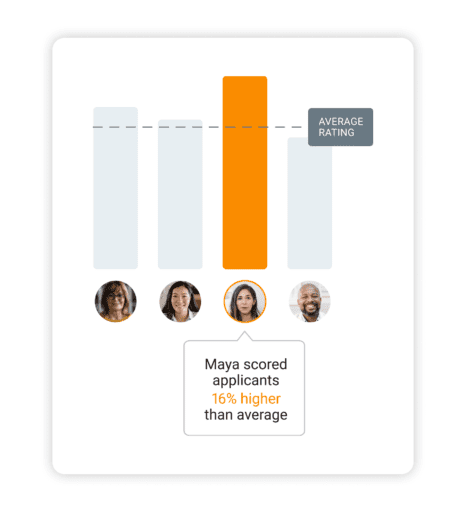

And with Kira’s inter-rater reliability dashboard, any significant deviation in scores across reviewers will be flagged.

Conservatism bias: Maintaining a prior viewpoint without adjusting for new information or evidence.

What it looks like: Conservatism bias can occur when a reviewer receives new information about an applicant – like a glowing employer reference or excellent interview performance – but that information gets mentally distorted by the reviewer in order to maintain their initial opinion of the applicant formed from their below-average test scores and mediocre transcripts.

How Kira helps: Reviewers may know that they are assessing a competency such as empathy, yet hold a preconceived notion of what empathy is and what it should look like in an interview setting.

Kira helps mitigate this by using defined rubrics and rating scales that clearly lay out which competencies you’re evaluating applicants for, and the definitions of each score on the rating scales.

Kira’s inter-rater reliability dashboard can also help identify scoring patterns amongst reviewers, flagging any reviewer who scores applicants significantly higher or lower for one competency and opening the door for that reviewer to receive clarification or more training.
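For teams curious about what this kind of flagging could look like in practice, here is a minimal sketch in Python. It is an illustration only and not Kira’s implementation: the function name `flag_outlier_reviewers`, the data layout, and the threshold of 1.5 standard deviations are all assumptions made for the example.

```python
from statistics import mean, pstdev


def flag_outlier_reviewers(scores, threshold=1.5):
    """Return reviewers whose average score for one competency is unusual.

    `scores` maps a reviewer name to the list of scores that reviewer gave
    for a single competency. A reviewer is flagged when their average sits
    more than `threshold` standard deviations from the mean of all reviewer
    averages. Both the layout and the threshold are hypothetical choices.
    """
    # Average each reviewer's scores, skipping reviewers with no scores yet.
    averages = {name: mean(vals) for name, vals in scores.items() if vals}
    if len(averages) < 2:
        return []  # nothing to compare against

    overall = mean(averages.values())
    spread = pstdev(averages.values())
    if spread == 0:
        return []  # every reviewer has the same average

    return sorted(
        name
        for name, avg in averages.items()
        if abs(avg - overall) / spread > threshold
    )
```

A flag from a check like this is a prompt for a conversation about the rubric, not proof of bias: a reviewer may simply have been assigned an unusually strong or weak set of applicants.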

Bizarreness effect bias: Recalling only unusual information in a series of facts or details.

What it looks like: People with unusual experiences or stories tend to stand out in our memories.

Imagine that two candidates with identical resumes, competencies, and skillsets apply to your program. They both speak Japanese, but one learned it through a summer program in Japan, during which they climbed Mount Fuji, and the other took intro classes at their local language school. The first applicant would make a greater impression than the second, and we would remember them in more detail.

In admissions, the bizarreness effect not only affects the consistency with which we score applicants, but it also raises an equity problem: in many cases, the bias favours applicants from higher-income families who have the resources to support these unique experiences.

How Kira helps: Where traditionally reviewers take notes during an interview and complete their scoring after, Kira’s in-app rubrics allow them to score applicants in the moment. With easy-to-use scoring in the Kira platform, reviewers can seamlessly evaluate applicants during the interview (with Live Interviewing) or while watching the video (in an Asynchronous Assessment), helping them base their decisions on all the relevant information, not just the pieces that remain in their memories hours later.

Kira’s inter-rater reliability dashboard can also help keep the bizarreness effect from impacting the admissions process. By flagging significant deviations in scores between reviewers, the dashboard helps administrators monitor for possible biases.

Stereotype bias: An oversimplified understanding of a particular type of group, person, or thing.

What it looks like: Stereotyping is one bias that most of us are familiar with but often don’t realize when it’s affecting our own judgement.

Perhaps it’s that graduates from certain prestigious universities are more studious, or that people who come from an arts background are more laid back than people who have worked in the finance industry.

Regardless of whether it’s a positive or negative association, stereotypes about an applicant’s race, gender, age, or academic experience can unknowingly trigger assumptions about that applicant, which can unfairly influence the admissions process.

How Kira helps: This is where Kira’s ability to easily engage multiple, independent reviewers in the assessment process makes a significant difference. By increasing the number of reviewers that evaluate each applicant, Kira helps ensure that one reviewer’s evaluation can’t make or break an application, thereby reducing the potential impact of bias.

Status quo bias: Having an aversion to change or an emotional attachment to the current state of being.

What it looks like: This form of bias occurs when we fear the possible risks of the unknown and discount the benefits because of that fear or discomfort. In admissions, this can manifest as sticking to ‘traditional’ review methods, such as not updating admissions principles, or relying on the same metrics even though they don’t necessarily predict an applicant’s success in the program.

How Kira helps: Kira helps teams make that leap in a supported, structured, and validated way.

Our dedicated Client Success Managers (CSMs) are on hand to guide you through every step of the process and make sure that the experience for you, your reviewers, and your applicants is as seamless and professional as possible. By helping your team define the competencies that you want to assess applicants for, and updating existing rubrics or creating new ones to get to the heart of those competencies, your dedicated CSM helps ensure that your questions and rubrics are truly evaluating for that skill, and not just supporting the status quo.

By constantly evolving our own platform with the goal of making admissions more efficient, effective, and fair, Kira helps ensure that your process is growing to meet best practices in education admissions.

Recency bias: Assigning more weight or importance to a recent event or interaction than others in the past.

What it looks like: Imagine a reviewer is interviewing several applicants back to back. They’re more likely to have a detailed memory of the applicant they interviewed at 4 p.m. than of candidates they saw earlier in the day. The reviewer may remember the 3 p.m. applicant explaining their work experience, but when looking back on the day, the reviewer will likely give more emphasis to the 4 p.m. candidate’s awkward handshake.

How Kira helps: When it comes to recency bias, Kira helps minimize the impact by having reviewers score applicants in the moment.

The platform ensures that interviewers complete their review during or directly following their time with the applicant, using structured in-platform rubrics. In this way, we’ve removed the tendency for reviewers, no matter how good their intentions are, to ‘finish this later’ and inadvertently open the door to the influence of recency bias.

Kira’s Asynchronous Assessment is also great for minimizing this form of bias. It doesn’t matter if an applicant completed the assessment at 10 a.m. or 4 p.m. because, unlike synchronous interviews, viewing applicant responses and scoring applicants happens whenever and wherever is best for the reviewer.

I know we’re just scratching the surface on bias in admissions here. What haven’t we said that you want to share with admissions teams?

JY: I think one of the parting notes here is that there probably isn’t ever a perfect time to think about process change. For the schools or individuals who are trying to figure this out, it’s helpful to consider Kira as an investment in the continuous improvement of their process. Start where you are; we’ll add system and structure and bring in best practices. From there, we’ll have an established baseline and can continue to leverage our technology to improve processes and outcomes for you and your teams.