Each year hundreds of thousands of students step up to bat in the college admissions game. They compile their transcripts and test scores, prepare for their interviews, meet-and-greet with administrators, and wait, fingers crossed, for decision day.

Most of these applicants, however, will have their evaluation swayed by some form of review bias.

The team at Kira started researching bias in admissions because we believe every student deserves a level playing field on the road to college. This year, we assessed the admissions process of 145 programs in dozens of faculties at schools around the world to understand how they review their students.

We learned that although 97 percent of schools agree on the importance of fair, defensible admissions, many of their processes tell a different story. Fewer than half (47 percent) believe bias could be a factor in their own admissions process.

We also learned that schools aren’t talking about bias enough: 48 percent of admissions teams admit that in a given year, they only talk about the impact of bias ‘sometimes,’ ‘rarely’ or, at worst, ‘never.’

But they know it’s an issue. 70 percent of respondents believe that bias is a factor in admissions decisions at other schools, while 26 percent did not state an opinion on the topic.

It’s important to understand the context around admissions bias.

Bias is an inevitable part of our decision-making process and it exists in all areas of life, including the admissions process. Rather than turn a blind eye, schools need to start talking about it. From there, they can learn how to fix it.

Admissions bias isn’t one person on an admissions committee who favours one gender or race, a certain hair colour or eye colour. It arises out of a combination of issues that stem from inconsistencies in the admissions process.

Here are five critical observations we gathered from this study:

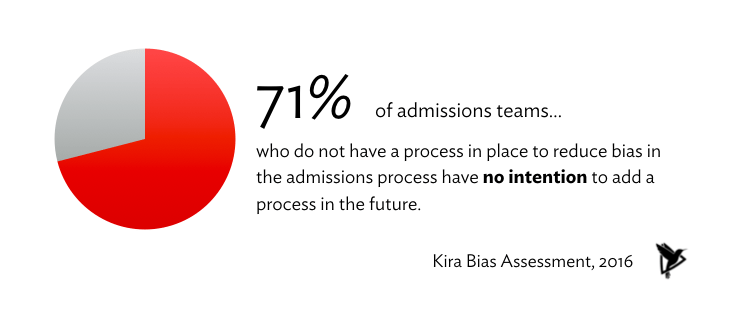

Many schools do not have any plan or process to reduce bias

Although 97 percent of schools say they believe in a fair admissions process, we were surprised to find that 41 percent have no process in place to reduce bias, and, of those schools, 71 percent have no intention of adding one in the future.

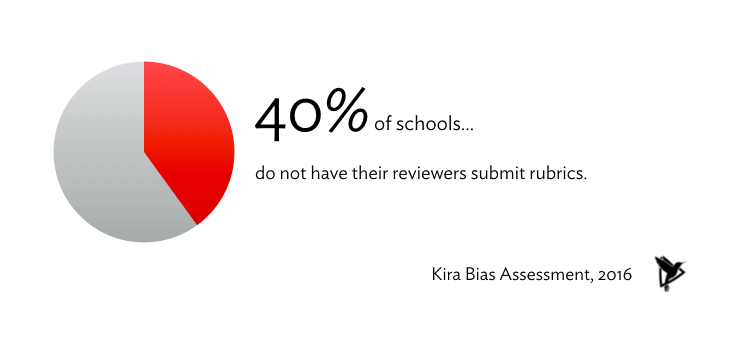

Many schools do not keep a paper trail for admissions decisions

40 percent of schools do not have their reviewers submit rubrics explaining their evaluations. On top of that, only 70 percent of schools using rubrics are confident that every member of their admissions team follows their standardized criteria.

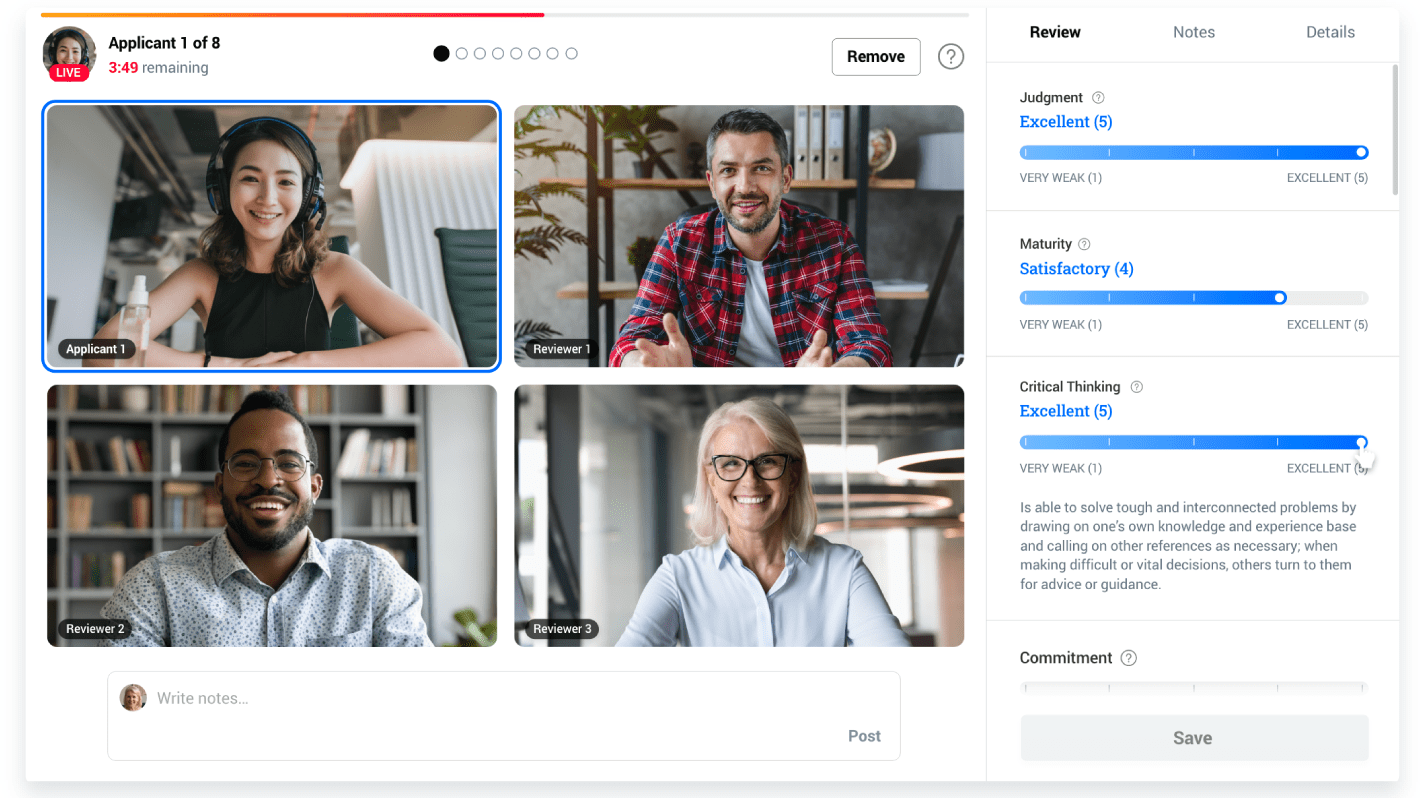

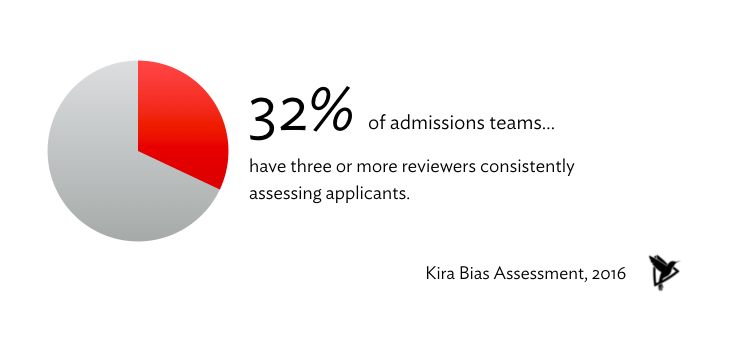

Roughly one third of schools have an ideal, consistent 'three or more' reviewer system

40 percent of schools have a variable number of application reviewers for each applicant, meaning some candidates get one set of eyes, while others get three. Fortunately, 32 percent of schools have three or more reviewers consistently assessing applicants, which is ideal for getting a variety of opinions.

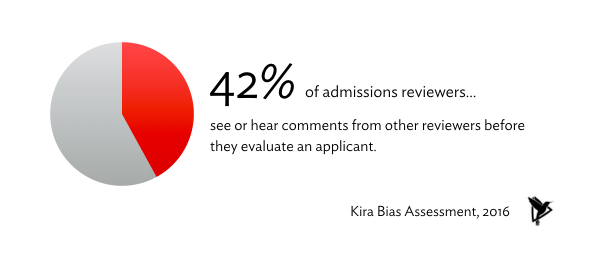

Many reviewers may be biased by their colleagues' feedback before assessing an applicant

When evaluating an applicant, 42 percent of reviewers see or hear the feedback of their colleagues before they have a chance to review or meet the applicant themselves. Sharing feedback before evaluations are complete can create groupthink: reviewers unknowingly bias one another for or against an applicant, rather than letting each colleague reach their own decision based on the admissions criteria.

Decision fatigue is a likely factor for bias on many teams

41 percent of schools reported that their interviewers say they are fatigued during review season. When admissions professionals are overworked or under-supported, the result is a myriad of possible oversights born of decision fatigue, physical exhaustion, or a lack of time to focus on details.

How did schools score overall?

We scored each of the 145 schools that completed the assessment with a letter ‘grade’ from A to E, based on its responses. Both the median and the mean score were C+, representing a likely risk of some admissions bias due to inconsistencies in review methods and criteria. Only 0.7 percent of schools qualified for a ‘perfect’ A+ score on the assessment. Any participants who answered with several “I’m not sure” responses were given an inconclusive result.